
ENISA Highlights AI Security Risks for Autonomous Cars

Automakers Should Employ Security-By-Design to Thwart Cyber Risks

Autonomous vehicle manufacturers are advised to adopt security-by-design models to mitigate cybersecurity risks, as artificial intelligence is susceptible to evasion and poisoning attacks, says a new ENISA report.


The study from the European Union Agency for Cybersecurity and the European Commission's Joint Research Centre warns that autonomous cars are susceptible to both unintentional harm, caused by existing vulnerabilities in the hardware and software components of the cars, and intentional misuse, where attacks can introduce new vulnerabilities for further compromise.

Artificial intelligence models are also described as susceptible to evasion and poisoning attacks where attackers manipulate what is fed into the AI systems to alter the outcomes.
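
To make the idea of an evasion attack concrete, here is a minimal sketch in Python. It is not taken from the ENISA report: the four-feature linear classifier, its weights, the input values and the `evade` helper are all hypothetical. It only illustrates how a small, bounded change to a model's input can flip its decision.

```python
# Toy linear classifier: a positive score means "stop sign".
# WEIGHTS, BIAS and the inputs below are made-up values for illustration only.
WEIGHTS = [0.9, -0.4, 0.7, -0.2]
BIAS = -0.5


def score(features):
    """Weighted sum of the input features plus a bias term."""
    return sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS


def classify(features):
    return "stop sign" if score(features) > 0 else "no stop sign"


def evade(features, epsilon):
    """Shift every feature by exactly epsilon in the direction that lowers the score.

    For a linear model this is the worst-case perturbation under a
    per-feature bound of epsilon (hypothetical attacker with model access).
    """
    return [x - epsilon * (1 if w > 0 else -1) for w, x in zip(WEIGHTS, features)]


clean = [0.8, 0.3, 0.6, 0.5]
adversarial = evade(clean, epsilon=0.25)

print(classify(clean), "->", classify(adversarial))
```

With these assumed numbers, the clean input is classified as a stop sign and the perturbed input is not, even though no feature moved by more than 0.25. A poisoning attack differs in that the attacker tampers with the training data rather than the input at decision time.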

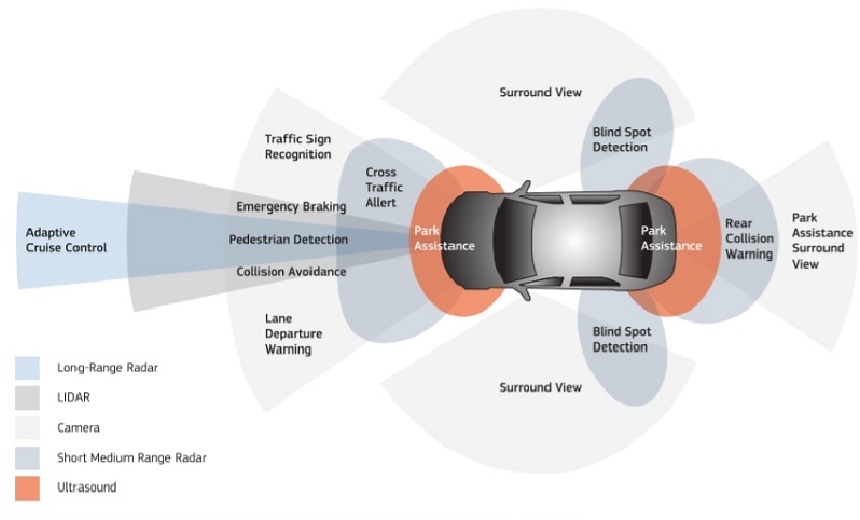

As a result, the report notes autonomous cars are vulnerable to potential distributed denial-of-service attacks and various other threats associated with their many sensors, controls and connection mechanisms.

"The growing use of AI to automate decision-making in a diversity of sectors exposes digital systems to cyberattacks that can take advantage of the flaws and vulnerabilities of AI and ML methods," the report notes. "Since AI systems tend to be involved in high-stake decisions, successful cyberattacks against them can have serious impacts. AI can also act as an enabler for cybercriminals."

Perceived Threats

Likely threats identified by the report include:

- Sensor jamming: Attackers can blind or jam the sensors used in autonomous cars by altering the AI algorithms after exploiting vulnerabilities to gain access to the vehicle's systems. They can then feed the algorithms wrong data to degrade automated decision-making.

- DDoS attacks: Hackers can disrupt the communication channels available to the vehicle to hinder operations needed for autonomous driving.

- Exposed data: Due to the abundance of information stored and utilized by vehicles for the purpose of autonomous driving, attackers can leverage vulnerabilities to access and expose user data.

Recommendations

The report provides several recommendations for automakers to implement to avoid attacks against autonomous cars; these include:

- Systematic security validation of AI models and data: Since autonomous cars collect large amounts of data such as input from multiple sensors, ENISA recommends that automakers should systematically monitor and conduct risk assessment processes for the AI models and their AI algorithms.

- Address supply chain challenges related to AI cybersecurity: Automakers should ensure compliance with AI security regulations across their supply chain by involving and sharing responsibility between stakeholders as diverse as developers, manufacturers, providers, vendors, aftermarket support operators, end users, or third-party providers of online services. They should be aware of the difficulty of tracing open source assets, with pre-trained models available online and widely used in ML systems, without guarantee of their origin. It is advised to use secure embedded components to perform the most critical AI functions.

- Develop incident handling and response plans: A clear and established cybersecurity incident handling and response plan should be considered, taking into account the increased number of digital components in the vehicle and, in particular, the ones based on AI. Automakers are advised to develop simulated attacks and establish mandatory standards for AI security incident reporting. They should organize disaster drills involving senior management, so that managers understand the potential impact if a vulnerability is discovered.

- Build AI cybersecurity knowledge among developers and system designers: A shortage of specialist skills hampers the integration of security in the automotive sector, so it is recommended that AI cybersecurity be integrated into organization-wide policy. Diverse teams should also be created, consisting of experts from ML-related fields, cybersecurity and the automotive sector, including mentors to assist the adoption of AI security practices. For the longer term, the report recommends bringing industry expertise into the academic curriculum by inviting leading practitioners in the field to give guest lectures or by defining special courses that tackle this topic.
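
The supply chain recommendation above notes that pre-trained models found online come with no guarantee of origin. One common mitigation, which is an assumption here and not something the report prescribes, is to pin a cryptographic digest of each approved model artifact and refuse to load anything that does not match. A minimal Python sketch, with hypothetical function names and placeholder model bytes:

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def is_trusted(artifact: bytes, pinned_digest: str) -> bool:
    """Check an artifact against a digest recorded when it was approved."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_of(artifact), pinned_digest)


# Placeholder bytes standing in for a downloaded model file (hypothetical).
model_bytes = b"hypothetical pre-trained model weights"
pinned = sha256_of(model_bytes)  # recorded once, at approval time

tampered = model_bytes + b"\x00"  # any modification changes the digest

print(is_trusted(model_bytes, pinned), is_trusted(tampered, pinned))
```

A digest check only shows that the file is the one that was reviewed. It says nothing about whether the reviewed model was trained on clean data, which is why the report pairs supply chain controls with systematic validation of models and data.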

Past Attacks

The extent of the challenge for the automotive sector to implement AI security by design was laid bare by the recall in February of nearly 1.3 million Mercedes-Benz cars in the U.S. due to a problem in their emergency communication module software. "They had to recall cars manufactured since 2016, which means that there was no proper testing plan for this feature for almost 5 years," Wissam Al Adany, CIO of automotive company Ghabbourauto in Egypt, told Information Security Media Group.

While attacks targeting physical autonomous cars remain relatively few, security researchers have successfully compromised various models of Tesla vehicles.

In November 2020, researchers from Belgium's University of Leuven - aka KU Leuven - found that they could clone a Tesla Model X driver's wireless key fob, and about two minutes later drive away with the car. A demonstration video posted by the researchers also suggests such an attack could be stealthy, potentially leaving a stolen car’s owner unaware of what was happening (see: Gone in 120 Seconds: Flaws Enable Theft of Tesla Model X).

In October 2020, researchers from Israel’s Ben-Gurion University of the Negev demonstrated how some autopilot systems from Tesla can be tricked into reacting after seeing split-second images or projections (see: Tesla's Autopilot Tricked by Split-Second 'Phantom' Images).

In yet another case, an independent security researcher uncovered a cross-site scripting vulnerability in the Tesla Model 3 that could enable attackers to access the model's vitals endpoint, which contained information about other cars (see: How a Big Rock Revealed a Tesla XSS Vulnerability).